Linear regression: strategy, optimization, and an example

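Regression searches for the optimal weights w. Iterative optimizers such as gradient descent do this by updating w step by step, while the normal equation computes it directly.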

- 算法

- 线性回归

- 策略

- 最小二乘法

- 优化

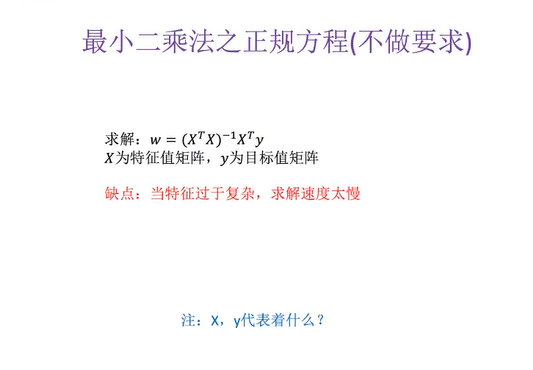

- 正规方程

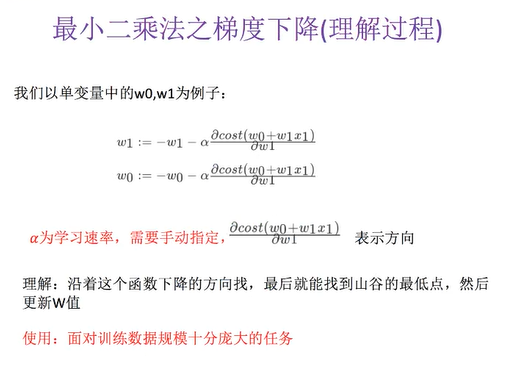

- 梯度下降
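For reference, the model being fitted is the standard linear model below. The exact formulas shown in the two images are not recoverable from the text, so this is the textbook form rather than a transcription of them; the bias is folded into w by assuming a constant feature x_0 = 1.

```latex
h_w(x) = w_0 x_0 + w_1 x_1 + w_2 x_2 + \dots + w_n x_n = w^\top x, \qquad x_0 = 1
```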

Loss function

The sum of squared errors, also called least squares.
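Written out, the least-squares loss over m samples is J(w) = Σᵢ (h_w(xᵢ) − yᵢ)², with h_w(x) = wᵀx. A minimal NumPy sketch on a tiny hand-checkable dataset follows; the function name `sse_loss` is illustrative, and X is assumed to already contain a leading column of ones for the bias term.

```python
import numpy as np


def sse_loss(w, X, y):
    """Sum of squared errors: J(w) = sum_i (x_i . w - y_i)^2.

    X is assumed to already contain a leading column of ones for the bias term.
    """
    residuals = X @ w - y
    return float(residuals @ residuals)


# Tiny hand-checkable dataset generated from y = 1 + 2x
X = np.array([[1.0, 0.0], [1.0, 1.0], [1.0, 2.0]])
y = np.array([1.0, 3.0, 5.0])

print(sse_loss(np.array([1.0, 2.0]), X, y))  # 0.0: perfect fit
print(sse_loss(np.array([0.0, 2.0]), X, y))  # 3.0: every prediction is off by 1
```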

Normal equation
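The normal equation sets the gradient of the least-squares loss to zero and solves for w in one step: w = (XᵀX)⁻¹Xᵀy. A minimal NumPy sketch, assuming X already contains a bias column of ones and that XᵀX is invertible; the helper name `normal_equation` is illustrative. It calls `np.linalg.solve` on (XᵀX)w = Xᵀy rather than forming the inverse explicitly, which is the numerically safer way to evaluate the same formula.

```python
import numpy as np


def normal_equation(X, y):
    """Closed-form least-squares weights: solve (X^T X) w = X^T y."""
    return np.linalg.solve(X.T @ X, X.T @ y)


# Noise-free data generated from y = 1 + 2x, so the exact answer is w = [1, 2]
x = np.array([0.0, 1.0, 2.0, 3.0])
X = np.column_stack([np.ones_like(x), x])  # prepend the bias column of ones
y = 1.0 + 2.0 * x

w = normal_equation(X, y)
print(w)  # approximately [1. 2.]
```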

Gradient descent
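Gradient descent starts from an initial w and repeatedly steps against the gradient of the loss, w ← w − α∇J(w), where α is the learning rate. A minimal batch gradient-descent sketch in NumPy follows; the helper name, the learning rate 0.1 and the 1000 iterations are illustrative choices, not values from the source. It minimizes the mean of the squared errors instead of their sum: dividing by m only rescales the loss, so the minimizer is the same, and the usable learning rate no longer depends on the number of samples.

```python
import numpy as np


def gradient_descent(X, y, lr=0.1, n_iters=1000):
    """Batch gradient descent on the mean squared error.

    The gradient of (1/m) * ||X w - y||^2 is (2/m) * X^T (X w - y).
    """
    m, n = X.shape
    w = np.zeros(n)  # start from all-zero weights
    for _ in range(n_iters):
        grad = (2.0 / m) * X.T @ (X @ w - y)
        w -= lr * grad  # step against the gradient
    return w


# Same noise-free toy data, y = 1 + 2x, with a bias column of ones
x = np.array([0.0, 1.0, 2.0, 3.0])
X = np.column_stack([np.ones_like(x), x])
y = 1.0 + 2.0 * x

w = gradient_descent(X, y)
print(w)
```

On this data the iterates converge to the same w = [1, 2] that the normal equation gives in one step; too large a learning rate would make them diverge instead.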

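API

- sklearn.linear_model.LinearRegression: normal equation
- sklearn.linear_model.SGDRegressor: gradient descent

- sklearn.linear_model.LinearRegression()
  - Ordinary least squares linear regression
  - coef_: regression coefficients (w)
- sklearn.linear_model.SGDRegressor()
  - Fits a linear model by minimizing the loss with stochastic gradient descent (SGD)
  - coef_: regression coefficients (w)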
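A minimal side-by-side sketch of the two estimators on synthetic data; the dataset and all parameter values are assumptions chosen for illustration. `penalty=None` switches off SGDRegressor's default L2 penalty so that both estimators minimize plain squared error. Both expose the learned weights as `coef_` and the bias as `intercept_`.

```python
import numpy as np
from sklearn.linear_model import LinearRegression, SGDRegressor

# Synthetic stand-in data: y = 3*x1 - 2*x2 + 1 plus small Gaussian noise
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2))
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + 1.0 + rng.normal(scale=0.1, size=200)

# Closed-form ordinary least squares
lr = LinearRegression().fit(X, y)

# Stochastic gradient descent on squared error, without regularization
sgd = SGDRegressor(penalty=None, max_iter=1000, tol=1e-6, random_state=0).fit(X, y)

print("LinearRegression coef_:", lr.coef_, "intercept_:", lr.intercept_)
print("SGDRegressor     coef_:", sgd.coef_, "intercept_:", sgd.intercept_)
```

With features on a similar scale, the two sets of coefficients should agree closely. SGD only approximates the least-squares solution, which is one reason feature standardization matters more for it.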

Code: the normal-equation approach

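```python
# Boston housing dataset
# NOTE: load_boston was deprecated in scikit-learn 1.0 and removed in 1.2,
# so this script needs scikit-learn < 1.2
from sklearn.datasets import load_boston
# Split the dataset
from sklearn.model_selection import train_test_split
# Standardization
from sklearn.preprocessing import StandardScaler
# Linear regression APIs: normal equation (LinearRegression) and gradient descent (SGDRegressor)
from sklearn.linear_model import LinearRegression, SGDRegressor


def mylinear():
    """
    Predict house prices directly with linear regression.
    :return: None
    """
    # Load the data
    lb = load_boston()

    # Split into training and test sets
    x_train, x_test, y_train, y_test = train_test_split(lb.data, lb.target, test_size=0.25)

    # Standardize the features; the target values are standardized as well
    std_x = StandardScaler()
    x_train = std_x.fit_transform(x_train)
    x_test = std_x.transform(x_test)

    # Target values (StandardScaler expects 2-D input, hence reshape(-1, 1))
    std_y = StandardScaler()
    y_train = std_y.fit_transform(y_train.reshape(-1, 1))
    y_test = std_y.transform(y_test.reshape(-1, 1))

    # Estimator: solve with the normal equation
    lr = LinearRegression()
    lr.fit(x_train, y_train)

    # Learned weights
    print(lr.coef_)

    # Map the standardized predictions back to the original price scale
    print("Predicted price for each test sample:", std_y.inverse_transform(lr.predict(x_test)))

    return None


if __name__ == "__main__":
    mylinear()
```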

Output

```
[[-0.08594658 0.10587429 0.03719096 0.05847954 -0.2470157 0.28119965
  -0.0024222 -0.35092234 0.29293498 -0.23515776 -0.22392874 0.11506664
  -0.41204222]]
Predicted price for each test sample: [[23.3318286 ]
 [22.16399596]
 [21.67644558]
 [19.33536702]
 [24.47751323]
 [26.77945644]
 [21.54377775]
 [32.48313531]
 [18.26394982]
 [21.00867349]
 [29.58588574]
 [ 8.30455961]
 [40.53856155]
 [25.31741019]
 [34.99139631]
 [13.78600703]
 [20.78370108]
 [39.16164366]
 [28.8378045 ]
 [34.62125104]
 [28.41121513]
 [21.03824193]
 [22.27452833]
 [ 4.59955616]
 [25.68152117]
 [17.79315242]
 [22.46099398]
 [20.91268362]
 [20.50665514]
 [23.92939472]
 [32.40680933]
 [25.5426033 ]
 [13.40324077]
 [21.59457805]
 [19.2864125 ]
 [18.75542229]
 [34.87036745]
 [29.89244112]
 [19.1620046 ]
 [19.29372608]
 [ 7.03352657]
 [26.79754178]
 [22.65178924]
 [28.14309793]
 [37.19180311]
 [29.95516656]
 [31.21062847]
 [24.84231878]
 [25.59425735]
 [26.98568125]
 [18.36190949]
 [13.38705553]
 [20.54778211]
 [27.63954021]
 [17.60990129]
 [12.58452039]
 [23.77122144]
 [40.44000489]
 [16.22625076]
 [26.31747844]
 [27.68477754]
 [28.69660972]
 [16.31391408]
 [19.32620783]
 [28.13167972]
 [16.32397872]
 [27.04575492]
 [14.30923629]
 [22.44796508]
 [12.51254613]
 [12.20288026]
 [18.94934656]
 [30.21871332]
 [13.56752725]
 [ 9.64448255]
 [21.03023604]
 [14.26556247]
 [12.31487486]
 [31.4957859 ]
 [21.51277666]
 [22.90965224]
 [14.35297587]
 [20.55589656]
 [30.84403025]
 [27.73350924]
 [21.34238222]
 [17.43684517]
 [12.64470511]
 [21.34225926]
 [16.89654758]
 [24.79814847]
 [22.59279743]
 [23.60654073]
 [43.76037958]
 [20.36006143]
 [17.04017354]
 [14.61790428]
 [32.27399989]
 [40.60350748]
 [25.82007422]
 [11.10634013]
 [31.26398597]
 [29.41882519]
 [10.47280701]
 [18.87541098]
 [27.42611329]
 [15.98570192]
 [20.21293661]
 [21.10299278]
 [35.72078322]
 [36.88291904]
 [28.35732167]
 [17.0296265 ]
 [15.38484874]
 [25.7261575 ]
 [ 9.30016539]
 [18.23809434]
 [16.97676445]
 [28.79033321]
 [18.78944269]
 [22.42826536]
 [13.92951461]
 [24.97012093]
 [23.98308515]
 [23.78843597]
 [29.3725933 ]
 [13.94309816]]
```
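The case above imports SGDRegressor but only uses LinearRegression. A hedged sketch of the gradient-descent variant of the same pipeline follows. Because `load_boston` is unavailable in current scikit-learn, it uses `make_regression` synthetic data with 13 features as a hypothetical stand-in, so its numbers will not match the output above. Two shape details differ from LinearRegression: SGDRegressor expects a 1-D target, and its `predict()` returns a 1-D array that must be reshaped to 2-D before `inverse_transform`.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import SGDRegressor
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Hypothetical stand-in for the housing data: 506 samples, 13 features
X, y = make_regression(n_samples=506, n_features=13, noise=10.0, random_state=0)
x_train, x_test, y_train, y_test = train_test_split(X, y, test_size=0.25, random_state=0)

# Standardize the features
std_x = StandardScaler()
x_train = std_x.fit_transform(x_train)
x_test = std_x.transform(x_test)

# Standardize the target: StandardScaler needs 2-D input, SGDRegressor a 1-D target
std_y = StandardScaler()
y_train_std = std_y.fit_transform(y_train.reshape(-1, 1)).ravel()

# Gradient descent estimator
sgd = SGDRegressor(max_iter=1000, tol=1e-3, random_state=0)
sgd.fit(x_train, y_train_std)
print(sgd.coef_)

# Predictions are 1-D; reshape to 2-D, undo the target scaling, then flatten again
y_pred = std_y.inverse_transform(sgd.predict(x_test).reshape(-1, 1)).ravel()
print(y_pred[:5])
```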
